Under the principle of maximum entropy, the selected distribution is the one that makes the least claim to being informed beyond the stated prior data, that is, the one that admits the most ignorance beyond that data.

Pairing machine learning and alloys has proven to be particularly instrumental in pushing progress in a wide variety of materials, including metallic glasses, high-entropy alloys, and shape-memory alloys.
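To make the maximum-entropy selection concrete, here is a minimal sketch in Python. It assumes a toy setting that is not from the post: a six-sided die whose only stated prior data is its mean. The function names (`entropy`, `maxent_with_mean`) and the bisection solver are illustrative choices, not a reference implementation. Among distributions with the required mean, the maximum-entropy one has the form p_i proportional to exp(lam * v_i), and the sketch finds `lam` numerically.

```python
import math


def entropy(p):
    """Shannon entropy in nats; zero-probability outcomes contribute nothing."""
    return -sum(q * math.log(q) for q in p if q > 0)


def mean_of(values, p):
    """Expected value of `values` under the distribution `p`."""
    return sum(v * q for v, q in zip(values, p))


def maxent_with_mean(values, target_mean, lo=-50.0, hi=50.0, iters=200):
    """Maximum-entropy distribution on `values` with a prescribed mean.

    Assumes min(values) < target_mean < max(values). The solution is
    p_i proportional to exp(lam * v_i); because the mean increases with
    lam, lam can be located by bisection.
    """
    def distribution(lam):
        exponents = [lam * v for v in values]
        shift = max(exponents)  # subtract the max to avoid overflow
        weights = [math.exp(e - shift) for e in exponents]
        total = sum(weights)
        return [w / total for w in weights]

    for _ in range(iters):
        mid = (lo + hi) / 2
        if mean_of(values, distribution(mid)) < target_mean:
            lo = mid
        else:
            hi = mid
    return distribution((lo + hi) / 2)


faces = [1, 2, 3, 4, 5, 6]

# With a mean of 3.5 the constraint carries no extra information,
# so the result is (numerically) the uniform distribution.
p_uniform = maxent_with_mean(faces, 3.5)

# With a mean of 4.5 the distribution tilts toward the high faces.
p_skewed = maxent_with_mean(faces, 4.5)

# A hand-picked alternative with the same mean of 4.5 (hypothetical example).
alt = [0.0, 0.0, 0.0, 0.5, 0.5, 0.0]

print("maxent entropy:", entropy(p_skewed))
print("alternative entropy:", entropy(alt))
```

Both `p_skewed` and `alt` satisfy the same mean constraint, but `alt` also asserts that four of the six faces never occur, which the stated data does not support. Its lower entropy reflects that extra, unjustified claim.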

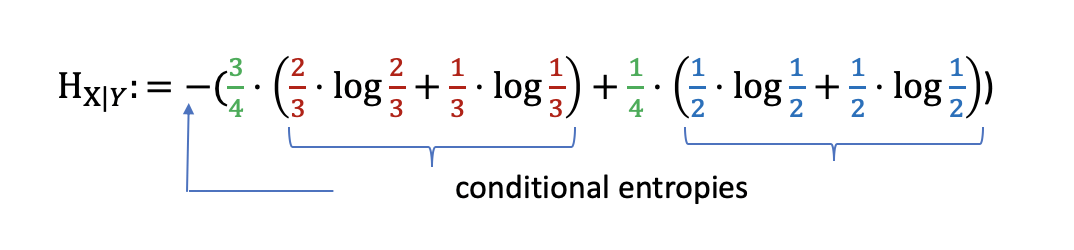

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with the largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information). Another way of stating this: take the precisely stated prior data and, among all distributions consistent with it, choose the one with the largest entropy. In ordinary language, the principle of maximum entropy can be said to express a claim of epistemic modesty, or of maximum ignorance.

The expected value of the information gain is the mutual information I(X; A) of X and A, i.e. the reduction in the entropy of X achieved by learning the state of the random variable A. In machine learning, this concept can be used to define a preferred sequence of attributes to investigate in order to narrow down the state of X most rapidly.

Classical cross entropy plays a central role in machine learning, and it is equal to the negative log-likelihood. The paper "Quantum Cross Entropy and Maximum Likelihood Principle", by Zhou Shangnan and one other author, describes quantum machine learning as an emerging field that intersects with machine learning. The authors define the quantum generalization of cross entropy, the quantum cross entropy, prove its lower bounds, and investigate its relation to quantum fidelity. In the classical case, minimizing cross entropy is equivalent to maximizing likelihood. The quantum case the abstract discusses is the one in which the quantum cross entropy is constructed from quantum data undisturbed by quantum measurements.

Machine learning with artificial neural network (ANN)-based methods is a powerful tool for the prediction and exploitation of the subtle relationships between the composition and properties of materials.
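Two of the classical claims above can be checked numerically. The sketch below is a minimal illustration under assumed toy data (the samples, the model `q`, and the attribute table are all made up for this example, and the function names are hypothetical). It shows that the cross entropy between the empirical distribution of a sample and a model equals the model's average negative log-likelihood on that sample, and that information gain can rank attributes by how much they reduce the entropy of a target.

```python
import math
from collections import Counter


def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) * log q(x), in nats."""
    return -sum(px * math.log(q[x]) for x, px in p.items() if px > 0)


def average_nll(samples, q):
    """Average negative log-likelihood of the samples under model q."""
    return -sum(math.log(q[x]) for x in samples) / len(samples)


def entropy_of(labels):
    """Empirical Shannon entropy of a list of labels, in bits."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attribute, target):
    """Entropy of `target` minus its expected entropy once `attribute` is known.

    Under the empirical distribution of `rows`, this is the mutual
    information between the target and the attribute.
    """
    groups = {}
    for row in rows:
        groups.setdefault(row[attribute], []).append(row[target])
    remainder = sum(
        len(g) / len(rows) * entropy_of(g) for g in groups.values()
    )
    return entropy_of([row[target] for row in rows]) - remainder


# Cross entropy against the empirical distribution equals the average NLL.
samples = ["a", "a", "b", "c"]
p_hat = {x: c / len(samples) for x, c in Counter(samples).items()}
q = {"a": 0.6, "b": 0.3, "c": 0.1}  # assumed model probabilities
ce = cross_entropy(p_hat, q)
nll = average_nll(samples, q)

# Attribute "a" determines the target completely; attribute "b" says nothing.
rows = [
    {"a": 0, "b": 0, "x": "no"},
    {"a": 0, "b": 1, "x": "no"},
    {"a": 1, "b": 0, "x": "yes"},
    {"a": 1, "b": 1, "x": "yes"},
]
gains = {attr: information_gain(rows, attr, "x") for attr in ("a", "b")}
ranking = sorted(gains, key=gains.get, reverse=True)

print("cross entropy:", ce, "average NLL:", nll)
print("gains:", gains, "preferred order:", ranking)
```

Because the two quantities in the first check are the same sum written two ways, choosing `q` to minimize one also minimizes the other, which is the classical equivalence between minimizing cross entropy and maximizing likelihood. The ranking in the second check is the "preferred sequence of attributes" idea in its simplest form: investigate the attribute with the largest gain first.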
